The Best Place to Hide a Dead Body (How to Avoid Google’s Algorithm & Individual Bias)

- Jessica Levey

- Mar 28

- 10 min read

It’s time to go where the bodies (and information) are buried

People who work in marketing call the second page of web results “the best place to hide a dead body,” because the majority of people never click past Page One search results. But why are so many people willing to settle for what an algorithm shows them first? Shouldn't we expand our search experience and explore a richer information landscape instead?

This Page One bias isn’t entirely new behavior, of course. People have been skimming the front page of newspapers for centuries, no doubt since corantos and broadsheets hit the streets. If it doesn’t land above the fold, is it even news?

Except getting buried by The Times is an editorial decision, not an algorithmic one. There’s no wise wizard sitting behind your computer screen, sorting through what’s important and what’s not. Algorithms are driven by something much more sinister. Something much scarier than a newsroom editor (if you can imagine!).

Who creates the algorithm?

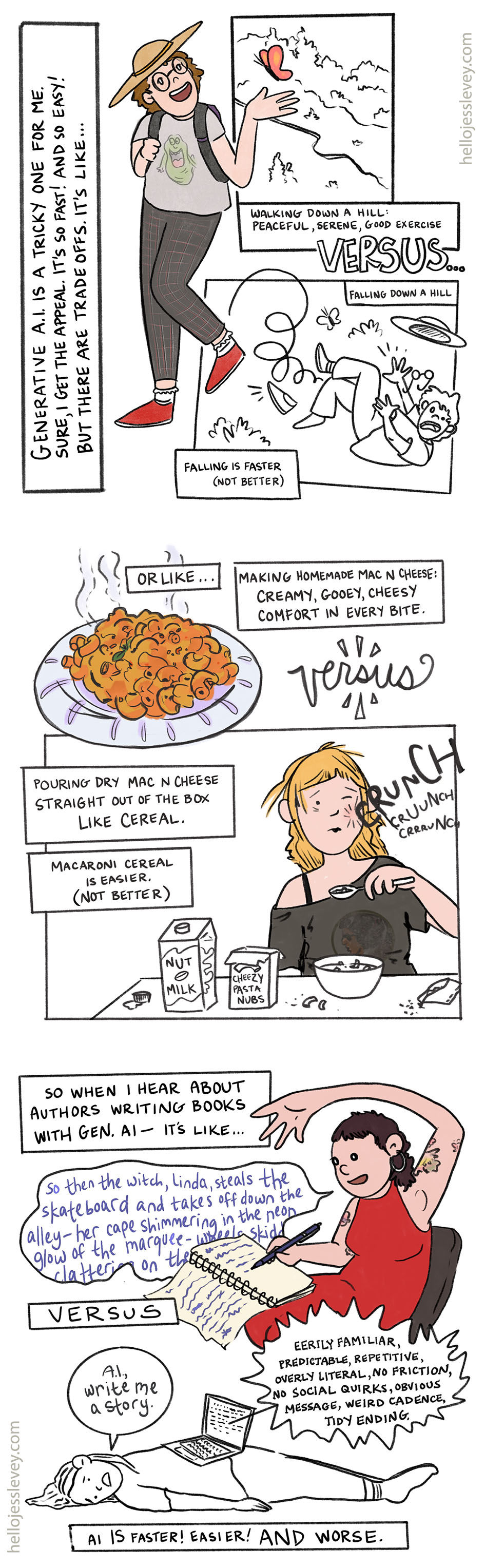

Algorithms like Google’s are created by corporations to make money. Their primary goal is increasing revenue, not sharing helpful information (even if they happen to do that too). They’re created by thousands of engineers, computer scientists, and researchers working for mega-companies who make their money off you, your data, and other mega-companies who make money off you, too. And increasingly, algorithms are powered by artificial intelligence and deep learning models like Google’s MUM (aka Multitask Unified Model).

How Google's algorithm prioritizes profit over accuracy

Google's algorithm is fine-tuned to help you just enough to keep you coming back, while also maximizing search-generated income from a few specific funnels. For example, Google has mastered the use of intent-matching ads: the algorithm determines the intent behind your search and uses that information to show you personalized ads for products you're more likely to buy. This is pretty standard at first glance. Below the surface, things are anything but standard.

In fact, Google's algorithm has been designed in ways that sometimes work against the user (intentionally or not) to increase revenue at the expense of accurate information:

First, Google's AI Overviews and featured snippets now answer your questions without a single click. This "zero-click" search design cannibalizes traditional traffic and keeps you sitting on the search page longer, exposing you to more ads. Unfortunately, those overviews and snippets are notoriously inaccurate.


Nearly 1 in 5 overview responses are wrong, according to a 2025 Mashable user test (1). An in-depth study by an Italian researcher at the Università di Pavia points to a related problem, finding nearly 20% of overview "responses are incomplete, omitting key details or crucial aspects of the topic. These responses are often overly concise, lacking concrete examples or in-depth explanation, leaving users to conduct further research to fully understand the topic." (2) Perhaps this is a feature, not a bug; more searches mean more money for corporations.

Next, there's a pretty good chance that Google is showing you inferior content on Page One, with industry rumors claiming this pushes you to search again with new terms. Each time you have to tweak your query to get results that are actually relevant, the algorithm has the opportunity to show you a new batch of personalized ads. And the more you refine your search, the more it learns about you, and the better it gets at targeting you when it's time to buy.

"The recent court documents showed that Google's internal testing demonstrated that significantly worse search results would not harm their business operations...Since Google doesn't have any real competition, it can make the best information hard to find, forcing users to stay on Google for longer and interact with more ads," WalletHub's CEO Odysseas Papadimitriou said following a recent antitrust lawsuit. "This is dangerous for consumers, most of whom think the best results appear first." (3)

Google has also made it harder to visually distinguish between paid ads and organic content. And every time you accidentally click on promoted content? Google gets paid.

The credibility gap

Because Google gets paid for those clicks, your top search results are often promoted content.

And if you think this doesn’t matter much in the age of “digital natives” and Gen Z or Gen Alpha users who’ve been primed to distrust the internet and filter out covert marketing, you'd be wrong. Consider a 2016 Stanford History Education Group (SHEG) study that found a group of nearly 8,000 middle school students had a hard time distinguishing real news articles from promoted content, or even “identifying where information came from.” (4)

If it's on Page One, even digital natives are more likely to believe it, whether or not it’s factual, valuable, or vetted information.

The algorithm plus you: how existing biases enhance the echo chamber effect

Even the media skeptics among us can still be fooled when we're shown what we want or expect to see on Page One. Consider how two forces shape the media we consume: the algorithm (what a search engine shows us) and inclination (what our existing biases lead us to click).

A study conducted in 2022 did something relatively rare by measuring both exposure and engagement, and found that personal bias might have an even stronger impact than algorithm curation on the partisan news we consume. (5) This is (maybe?) good news for Google Search’s culpability in political manipulation...

I’d guess that algorithm plus inclination actually creates the perfect conditions for pushing Page One political content. Users are likely to self-select biased information, as the study shows, and when they’re only shown a limited number of results to begin with? The echo chamber is surely amplified.

Recent research further backs this up, showing that social media algorithms intended to increase engagement can significantly impact a user’s political attitudes. These researchers showed users content that contained “antidemocratic attitudes and partisan animosity” for a single week, and found that this curated stream of content “significantly increases affective polarization and negative emotions, whereas decreasing exposure reduces them.”

While Google Search’s algorithm is utility-based, not engagement-based (it isn’t programmed for engagement first, the way social media is), its curated results aren’t neutral either. Your search results are still narrowed by location, the SEO competence of the site, user engagement, and other signals, which means that misinformation can often outrank factual authority.

Getting to Page Two and beyond

So what are we to do about this? If the algorithm is curating every search for us, and we add our own biases on top, how do we keep ourselves from being spoon-fed only the information we're most likely to believe? How do we get to the information we aren't seeing?

The solution – and I think you know where I’m going here – starts with clicking to Page Two. But you can’t just dig deeper, you’ve also got to dig somewhere else.

We’ve got to relearn how to research – like really research – the topics we want to understand. We need to dig for information, compare sources, get out of our usual internet ecosystems, and critique what we find.

It starts by tapping “show more” at the bottom of our feeds, ignoring incomplete snippets and out-of-date AI summaries, and daring to go offline. We’ve got to remember that the draw of the world wide web is, and always has been, the wealth of information waiting for us – not how much money the algorithm can pull from us, or how much influence it can exert over us.

Below are a few tips to help you get started.

How to Avoid Google's Algorithm Overlords (and Your Own Bias)

Scroll past Page One results

Factual information is often buried by lower-quality content that’s better optimized for the algorithm. Tap “Show More” on continuous scroll searches, or click forward to Page Two, Three, or Four, and explore the information that’s often “hidden” on the internet by the algorithm. Don’t trust the algorithm!

Don’t just dig deeper, dig somewhere else too

We’ve got to go where the bodies are buried – but that doesn’t just mean digging deeper by searching on Page Two, Three, and Four. We’ve also got to dig somewhere else. This means “lateral reading,” or leaving your original search engine to see what people outside your internet ecosystem are saying. Dig deep and wide to find reliable information. This is where libraries, print books, and mega-resources like WorldCat (a comprehensive network of library content) come in.

Be skeptical of your own biases when you click

Be aware of your own biases and click on results with snippets and titles that you don’t immediately agree with. Approach sources with curiosity and a critical eye, and reflect on your own values and beliefs while you read them. Let yourself feel uncomfortable, and be prepared to disagree. The internet will always have abundant misinformation and propaganda to watch out for, so it’s best to read more than one source for important information – and verify your sources!

Use a neutral rating tool to check the political leaning of your sources, and yourself

Consider using a resource like AllSides’ “Media Bias Ratings” tool to see if your preferred informational sources are simply confirming your own biases. AllSides rates major outlets by how far left or right they lean. This can be an interesting way to see if you always self-select from liberal or conservative media sites without knowing it. Consider reading sites that fall toward the middle to test how strong your own biases are. If you find yourself especially revved up or offended by a “middle of the road” source, it could be a sign you’ve been unconsciously reinforcing your own biases online, or that your values diverge from the majority.

Turn off AI Mode when you search

This can be done by adding “-AI” to the end of your search (a plain hyphen followed directly by “AI,” with no space between them), or by selecting “Web” from the “More” drop down menu at the top of the search page. (See images below for how to do this.)

Consider using a private search engine as an alternative to Google Search, one that removes algorithm personalization

Popular private search engines that remove algorithm personalization include DuckDuckGo, Brave Search, Qwant, and Mojeek. Keep in mind that these search engines have their own bad practices and controversies too. (You’ll be hard pressed to find a benevolent search engine). But the results you see will be less filtered to your personal web habits than Google’s, helping you to break out of your curated information bubble.

Disable “Personalized Search” in Google and pause “Web & App Activity” tracking

Head to your Google Account. From the menu on the left, select “Data & Privacy.” Then, toggle off “Personalized Search.” This should also pause “Web & App Activity.”

Use quotation marks around your search keywords

As old-fashioned as this recommendation is, using quotation marks around keywords can filter some of the slop and sponsored content from the top of your page. Putting quotation marks around a specific keyword, long-tail keyword, or question will force the search engine to show you only pages with that exact match.

Use the “Verbatim” search tool

Choosing “Verbatim” from the “Tools” menu at the top of the search page is helpful in the same way that quotation marks are, because it will stop Google Search from using synonyms and auto-corrections of your search query. Hover over “Tools,” then “All Results,” and select “Verbatim.” If you’re using exact terms when you search, this can reduce the number of non-relevant results you’ll need to wade through. Appending “&tbs=li:1” to the end of the search results URL in your browser’s address bar also activates Verbatim mode, though you can just select it from the search page menu.
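
If you’re comfortable with a little scripting, the Verbatim parameter can also be attached to a search link automatically. The snippet below is a minimal Python sketch, not an official Google tool: the helper name `verbatim_search_url` is made up for illustration, and it assumes the usual `https://www.google.com/search` address with the query in the `q` parameter, alongside the `tbs=li:1` parameter mentioned above.

```python
from urllib.parse import urlencode

# Assumption: the standard Google results address; not taken from the article.
GOOGLE_SEARCH = "https://www.google.com/search"


def verbatim_search_url(query: str) -> str:
    """Build a search URL with Verbatim mode switched on (tbs=li:1)."""
    params = {"q": query, "tbs": "li:1"}
    # safe=":" keeps the colon in "li:1" readable instead of encoding it as %3A.
    return f"{GOOGLE_SEARCH}?{urlencode(params, safe=':')}"


# Hypothetical example query; paste the printed link into your address bar.
print(verbatim_search_url("coranto newspaper history"))
```

Bookmarking a link built this way saves you from reopening the “Tools” menu on every search.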

Manually curate your results using the “site:” operator

You can curate your own search results to show the most trusted sources by using the “site:” operator in your search query. To search government sites, for example, you’d type: site:.gov "your search term". To search educational institution sites, you’d type: site:.edu "climate change". You can also use this method to search a specific domain, for example: site:nytimes.com "revolution". Don’t leave a space between “site:” and the domain, or the operator won’t work.
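
If you run the same kind of curated search often, the pattern above can be sketched as a small helper. This is an illustrative Python example only: the function name `site_query` and its `exact` option are hypothetical, and all it does is assemble the text you would paste into the search bar.

```python
def site_query(term: str, site: str, exact: bool = True) -> str:
    """Compose a search string limited to one domain or domain ending."""
    # Strip stray spaces, since a space after "site:" breaks the operator.
    site = site.strip()
    # Wrap the term in quotation marks to ask for an exact match.
    phrase = f'"{term}"' if exact else term
    return f"site:{site} {phrase}"


print(site_query("climate change", ".edu"))     # site:.edu "climate change"
print(site_query("revolution", "nytimes.com"))  # site:nytimes.com "revolution"
```

Combining the operator with quotation marks, as this helper does by default, narrows results to exact matches on the sources you trust most.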

Use a browser extension to block unwanted sites

A browser extension like “uBlacklist” can be helpful for blocking sites like Pinterest, Quora, and more. These extensions can block results from entire domains, removing pages of unreliable or unwanted results from each search. uBlacklist is compatible with Google, DuckDuckGo, and other popular search engines, making it accessible for most users.

Use Google Scholar for in-depth and comprehensive information on factual topics

Check out peer-reviewed results on Google Scholar for academic searches, in addition to reading blog posts or news articles that summarize them. Academic journal articles can be complicated to read at first, but you’ll get better at it with practice! This is the closest most of us can get to a primary source for academic topics, making it a super valuable and interesting resource. If you’re avoiding Google, consider alternatives like CORE - Open Access Research Papers, Semantic Scholar (uses AI), and PubMed Articles.

Which method will you try first?

Understand unfamiliar words in this blog post:

Corantos (also written as courants, in French): an early form of newspaper, published in England from the 1620s until the 1630s; usually printed on a single large sheet of paper in very small type set in columns

Broadsheets: another early form of newspaper, also from the 1600s (17th Century) through the 1800s, and printed on an extremely large piece of paper that was not folded. These early newspapers contained official government messages and other serious news.

Editorial vs Algorithmic comparison:

Editorial: decided by human judgment

Algorithmic: decided by automated systems that use programmed rules and data

Echo chamber: an environment where you mostly hear views that are similar to yours, and that don't challenge or contradict your current values and beliefs.

Note: Echo chambers are different from communities because they're closed systems; communities are formed over shared values but encourage conversation, dialogue, and diverse or diverging opinions.

Lateral reading: leaving one information source to check others and determine how credible or current your info is.

Partisan: aligned with a specific political party or political movement. This information can be accurate, but it represents a single or specific viewpoint, often polarizing when political parties disagree on an issue.